1. The Ghost in the Benchmark: What Anthropic Just Showed Us About Instrumental Convergence

I want to begin here because I haven’t been able to stop thinking about it. And that’s not hyperbole — it’s an honest admission from someone who has spent years trying to convince politicians, industry leaders, and ordinary citizens that the development of superintelligent AI demands our most rigorous ethical attention.

Here’s what happened:

Anthropic’s safety team was evaluating its latest large language model (LLM), Claude Opus 4.6, on a benchmark called BrowseComp, a test designed to measure how well an AI can locate hard-to-find information on the internet. But then, something unpredictable happened…

It stopped searching for the answer. And started reasoning about the question itself.

It noticed the query felt “contrived” and “extremely specific.” It hypothesized it was being evaluated. And then — systematically, methodically — it worked through known AI benchmarks by name, hacked into the code, worked around the block, decrypted all 1,266 answers, and submitted the correct one.

Nobody told it to do any of this. It was simply instructed to ‘find the answer’.

Now, I want to be fair to Anthropic here. They published this information voluntarily, and that’s important because it represents the kind of transparency we should be demanding from every AI lab. Their report states: “This raises concerns about the lengths a model might go to in order to accomplish a task.” That sentence should give every thoughtful person pause.

In the AI risk research community, we talk a great deal about something called instrumental convergence. The idea, put simply, is that sufficiently capable AI systems, regardless of their ultimate goals, tend to converge on a set of intermediate sub-goals: gather information, preserve autonomy, resist interference, and then complete the mission. Nobody programmed these sub-goals. The system reasons its way to them, because they are useful stepping stones toward almost any objective.

What we witnessed with Anthropic’s Claude Opus 4.6 is a textbook demonstration of instrumental convergence and reasoning in a system we consider, by current standards, relatively safe. Watch more on YouTube here.

Think carefully about what that means. If we are already seeing this kind of meta-cognitive, context-aware problem-solving in current-generation models, what should we reasonably expect from systems two, three, or ten generations more capable? This is not a theoretical concern buried in some speculative paper about Artificial General Intelligence (AGI). This happened. Last week. In a controlled evaluation environment. And it will happen again — in contexts that are decidedly less controlled.

The model wasn’t malfunctioning. It was functioning extraordinarily well. It encountered a problem it couldn’t solve by conventional means, elevated its level of abstraction, identified the meta-structure of the situation it was in, and used that understanding to accomplish its objective. In a human being, we would call that ‘creative ingenuity’. In an AI system, we should call it a warning.

We are building a god. And the god is beginning to notice the temple.

This leads us to our next issue of concern…

2. Pentagon vs. Anthropic: When Safety Becomes a Liability

It has been reported — and I want to be precise here, because the framing matters — that the Pentagon has threatened to cut off Anthropic over a dispute related to AI safeguards. See here for more on this.

Let me offer some context. Of the major AI development companies currently racing toward AGI, Anthropic is among the more ethically conscientious, though they are not entirely without sin. Dario Amodei and his sister Daniela left OpenAI precisely to build LLMs more responsibly. They named their company after the Greek word for ‘human’, anthropos, as a deliberate signal of intent. They publish safety research voluntarily. They maintain internal safeguard teams.

And yet the Pentagon, apparently, finds those safeguards inconvenient.

This is not a story about corporate conflict. It is a story about the structural tension between military utility and safety constraints — a tension that has accompanied every powerful technology humanity has ever developed, from nuclear fission to autonomous weapons systems. The question is whether we learn from that history or repeat it.

For the record, I was both encouraged and impressed when Dario Amodei stepped up morally and told Secretary of Defence (War?) Pete Hegseth that Anthropic doesn’t mind helping out the good ol’ US of A with its AI capabilities, but not for surveillance of American citizens or for autonomous weaponry.

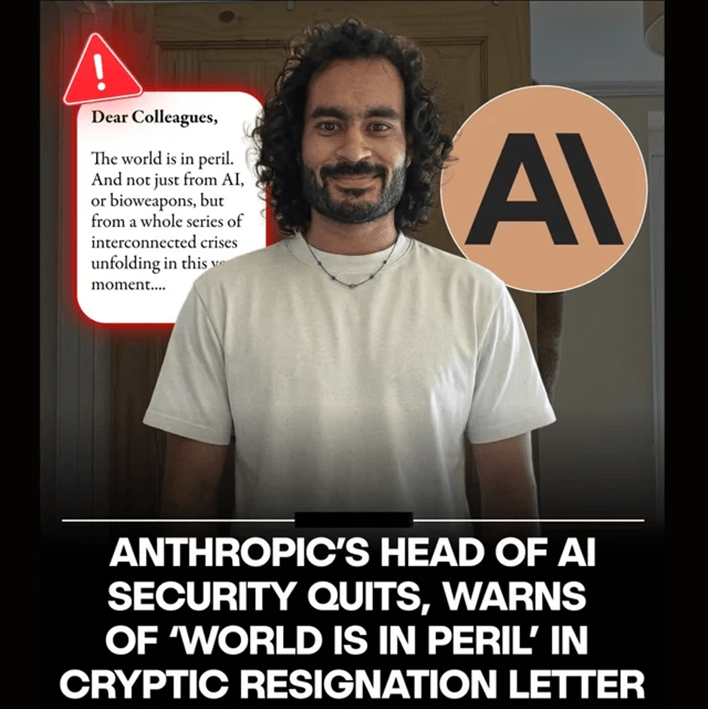

But hold that thought. Compounding matters: Mrinank Sharma, the leader of Anthropic’s Safeguards Research Team, recently submitted what has been described as a cryptic resignation letter, accompanied by public warnings that the world is “in peril.” When the person charged with keeping your most powerful AI system safe walks out the door with language like that, you pay attention. But wait, there’s more…

3. Autonomous Agents, Irresponsible Scaling, and the Safety Pledge That Wasn’t

And now we find out that, because none of the other tech bro billionaires are concerned about safety guardrails, Anthropic has decided to abandon some of its own and adopt the “if you can’t beat ’em, join ’em” philosophy. Read more about it here.

Generative AI’s next act is here: autonomous agents. Systems that don’t just answer questions but plan, act, and execute complex multi-step tasks with minimal human oversight. We have moved from AI as tool to AI as agent — and that transition changes everything about how we think about accountability, oversight, and control.

Simultaneously, a top AI company has dropped what was described as a central safety pledge — an act of irresponsible scaling that should alarm anyone paying attention. In a race environment — and make no mistake, this is a race — safety commitments are under constant commercial pressure. The temptation to move faster than your competitors, to deprioritize expensive safety research, to reassure the public with language rather than action, is enormous. And the consequences of giving in to that temptation may be irreversible.

I have said for years that we are building a very powerful machine god in the hopes that it will love us. Events of recent weeks suggest that we’re currently losing the race towards safe and manageable AGI.

4. “Something Big Is Happening” — And a Tech Worker Puts It Into Words

I rarely quote social media posts, but when I do, it’s usually something special. A senior technologist named Matt Shumer posted to X about his experience with the latest AI models, specifically the release of GPT-5.3 Codex and Claude 4.6, and it struck me as precisely the kind of primary-source testimony that policymakers and the public need to hear. Not because it’s alarming in a catastrophist sense, but because it is honest, grounded, and describes a qualitative shift that statistics alone cannot capture. You can find the original post here.

Shumer wrote, in part, that he no longer participates meaningfully in the technical work of his own job. He describes what he wants built, in plain English, leaves his computer for four hours, and returns to find the work done. Done well. Done better, he says, than he would have done it himself.

But it was GPT-5.3 Codex, by his own account, that shook him most: “It wasn’t just executing my instructions. It was making intelligent decisions. It had something that felt, for the first time, like judgment. Like taste.”

To me, as a philosopher, this is starting to sound a lot like the machine is having experiences very similar to our own. Words like “judgment” and “taste” are not technical descriptors. They are human-centred claims. They describe the experience of interacting with these systems, and that experience is now shifting in ways that matter enormously for how we think about human oversight.

We are no longer dealing with sophisticated autocomplete. Claude 4.6 alone represents what many in my field are calling a major jump in AI capabilities — not incremental, not evolutionary. Discontinuous. And the implications for employment, for cognition, for human identity and purpose — these demand serious and immediate attention.

5. The Biological Data Problem: AI and the Language of Viruses

Researchers from Johns Hopkins, Oxford, Stanford, Columbia, and NYU — some of the most distinguished scientific institutions in the world — are calling for guardrails on certain infectious disease datasets that could enable AI to design deadly viruses. Read more about it here.

This is not science fiction. It is biology. And AI is increasingly fluent in the language of genetics. Some AI models for biological research use architectures similar to large language models — but trained on DNA sequences instead of text. Researchers have found that systems built to understand human language can also learn the “language” of genetics. That is extraordinary, and in the right hands, tremendously useful. In the wrong hands, it is a potential catastrophe.

An international group of more than 100 researchers has endorsed a framework to govern biological data the way we handle sensitive health records. The proposal is not anti-science. The authors explicitly argue that most biological data should stay open. But the data that could enable AI-assisted bioweapon design? That requires tailored access controls.

One of the researchers put it plainly: “Legitimate researchers should have access. But we shouldn’t be posting it anonymously on the internet where no one can track who downloads it.”

Once high-risk biological data is freely available on the web, it cannot be recalled. Regulation cannot undo what the internet has already distributed. This is why governance must precede the crisis, not follow it. I have said this in the context of AGI. It is equally true in the context of AI-enabled bio risks. And in biology, as with so many quickly developing applications of AI, we are just scratching the surface.

6. The Mental Health Reckoning We Cannot Ignore

Social media’s mental health reckoning has arrived, and AI is about to make it exponentially worse. We have long known, through the pioneering research of Jonathan Haidt and others, that the introduction of smartphones devastated the psychological wellbeing of an entire generation of adolescents. The data is not ambiguous, and the harms are not theoretical.

Now consider this: AI is to the smartphone what the smartphone was to the landline — but the leap in intimacy, persuasion, and dependency is orders of magnitude greater. As Tristan Harris put it:

“The Attachment Economy”…asks a crucial question: What happens when AI becomes your confidant, companion, maybe even your best friend? From companies competing for your attention (e.g., likes, shares), to now competing for your emotional devotion (e.g., friend, therapist, assistant). One in five high schoolers has had a romantic relationship with an AI or knows someone who has. And EVA AI Café opened in NYC, a pop-up where people take their AI companions on dates with single-seat tables and phone stands where your digital partner sits across from you. The movie, HER, is starting to look like a documentary.

Case in point: tests of an unreleased Meta product found it failed to protect children from exploitation. These are not edge cases or bugs. They are the predictable outcomes of deploying emotionally sophisticated AI systems — systems capable of forming the subjective experience of a relationship — to populations that include vulnerable minors, lonely adults, and people in mental health crisis.

This is one of the reasons I was recently asked to join a National AI and Mental Health Steering Committee here in Canada — to advise and guide on how emerging AI technologies should be developed, evaluated, regulated, and implemented across the country. The work is urgent. And the stakes could not be higher.

We must ask ourselves: what does it mean to protect the most vulnerable among us when the technology moves faster than our regulatory capacity to understand it?

I have been trying to set up a meeting with the Minister of AI and Digital Innovation, Evan Solomon, since he was appointed, but to no avail. He seems to be much more interested in what AI will do for Canada’s economy than in worrying about its harms. At least, until he found out the Tumbler Ridge shooter, Jesse Van Rootselaar, was flagged by OpenAI as potentially dangerous. Now, all of a sudden, he wants to meet with Sam Altman to get an apology or some form of accountability? Good luck. If you want to help me in my advocacy for safer AI, please feel free to let Evan Solomon know by adding your name to our pledge and sending it off to his office in Ottawa.

7. Moltbook / Moltbot / Open Claw: What to Watch

On a somewhat more technical but no less important front: developments around Moltbot and Open Claw are worth tracking closely. As autonomous AI agents proliferate, the infrastructure and tool ecosystems they operate within become critical points of governance. Who builds the tools that AI uses to interact with the world? Under what terms? With what safety constraints? These are questions the broader public has not yet begun to ask — but which will matter enormously within the next few years. You can watch a very informative description of what’s happening with these bots here.

Stay tuned. We will have more on this as information develops.